Unit 2.3 Extracting Information from Data

Blog about Big Idea 2.3 and Hacks

- Files To Get

- Pandas and DataFrames

- Cleaning Data

- Extracting Info

- Create your own DataFrame

- Example of larger data set

- APIs are a Source for Writing Programs with Data

- Hacks

Files To Get

Save this file to your _notebooks folder

Save these files into a subfolder named files in your _notebooks folder

wget https://raw.githubusercontent.com/nighthawkcoders/APCSP/master/_notebooks/files/data.csv

wget https://raw.githubusercontent.com/nighthawkcoders/APCSP/master/_notebooks/files/grade.json

Save this image into a subfolder named images in your _notebooks folder

wget https://raw.githubusercontent.com/nighthawkcoders/APCSP/master/_notebooks/images/table_dataframe.png

Pandas and DataFrames

In this lesson we will be exploring data analysis using Pandas.

- College Board talks about ideas like

- Tools. "the ability to process data depends on users' capabilities and their tools"

- Combining Data. "combine county data sets"

- Status on Data. "determining the artist with the greatest attendance during a particular month"

- Data poses challenges. "the need to clean data", "incomplete data"

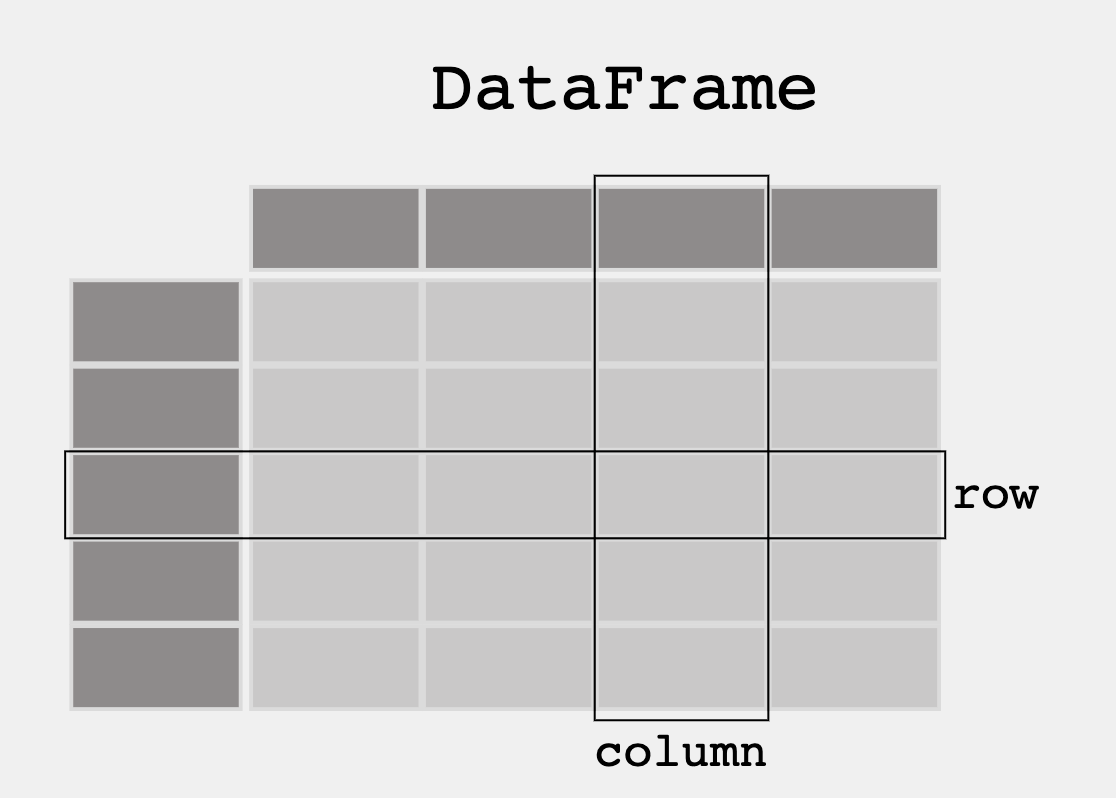

- From Pandas Overview -- When working with tabular data, such as data stored in spreadsheets or databases, pandas is the right tool for you. pandas will help you to explore, clean, and process your data. In pandas, a data table is called a DataFrame.

'''Pandas is used to gather data sets through its DataFrames implementation'''
import pandas as pd

Cleaning Data

When looking at a data set, check to see what data needs to be cleaned. Examples include:

- Missing Data Points

- Invalid Data

- Inaccurate Data
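As a minimal sketch of how these three problems can be spotted in pandas, the block below uses a small invented table. The column names mirror grade.json, but the values (including the bad ones) are made up for illustration. The 0.0 to 4.0 GPA range is also an assumption.

```python
import pandas as pd

# Invented rows for illustration; NOT the contents of files/grade.json
raw = pd.DataFrame({
    "Student ID": [123, 246, 578, 469, 324],
    "Year in School": [12, 10, None, 11, 9],
    "GPA": ["3.57", "4.0", "three", "5.2", "2.91"],
})

# Missing data points: count empty cells in each column
missing = raw.isna().sum()
print(missing)

# Invalid data: convert GPA to numbers; text that cannot be parsed becomes NaN
raw["GPA"] = pd.to_numeric(raw["GPA"], errors="coerce")

# Possibly inaccurate data: flag GPAs outside an assumed 0.0 to 4.0 scale
out_of_range = raw[(raw["GPA"] < 0) | (raw["GPA"] > 4.0)]
print(out_of_range)

# One possible cleaning choice: drop rows with missing values, then out-of-range GPAs
clean = raw.dropna()
clean = clean[clean["GPA"].between(0, 4.0)]
print(clean)
```

Dropping rows is only one option. Filling a missing year or asking the source for a corrected GPA may be better when rows are scarce.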

Run the following code to see what needs to be cleaned.

The code prints everything inside files/grade.json as a table.

Each row, indexed 0 to 11, holds one student's ID, year in school, and GPA.

The printed output is somewhat confusing and hard to read because there are no borders between the cells.

df = pd.read_json('files/grade.json')
print(df)
# What part of the data set needs to be cleaned?
# From PBL learning, what is a good time to clean data? Hint, remember Garbage in, Garbage out?

print(df[['GPA']])
print()
# try two columns and remove the index from the printed output
print(df[['Student ID','GPA']].to_string(index=False))

print(df.sort_values(by=['GPA']))
print()
# sort the values in reverse order
print(df.sort_values(by=['GPA'], ascending=False))

print(df[df.GPA > 3.00])

print(df[df.GPA == df.GPA.max()])
print()
print(df[df.GPA == df.GPA.min()])
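The filters above use one condition at a time. Below is a sketch of two related techniques: combining conditions with `&`, and fetching the top row directly with `idxmax`. It runs on a small invented stand-in for the grade data, not the real file.

```python
import pandas as pd

# Invented stand-in for cleaned grade data
df = pd.DataFrame({
    "Student ID": [123, 246, 578, 469],
    "Year in School": [12, 10, 9, 11],
    "GPA": [3.57, 4.00, 2.78, 3.45],
})

# Combine conditions with & (and) or | (or); each condition needs its own parentheses
upper_honors = df[(df.GPA > 3.00) & (df["Year in School"] >= 11)]
print(upper_honors)

# idxmax gives the index label of the highest GPA; loc then fetches that row
best = df.loc[df.GPA.idxmax()]
print(best)
```

One difference from the `== df.GPA.max()` filter: `idxmax` returns only the first row when several students tie for the top GPA, while the filter returns all of them.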

import pandas as pd
# the data can be stored as a python dictionary
# (named data rather than dict so Python's built-in dict is not shadowed)
data = {
    "calories": [420, 380, 390],
    "duration": [50, 40, 45]
}
# stores the data in a data frame
print("-------------Dict_to_DF------------------")
df = pd.DataFrame(data)
print(df)
print("----------Dict_to_DF_labels--------------")
# or with the index argument, you can label rows.
df = pd.DataFrame(data, index = ["day1", "day2", "day3"])
print(df)
print("-------Examine Selected Rows---------")
# use a list for multiple labels:
print(df.loc[["day1", "day3"]])
# refer to the row index:
print("--------Examine Single Row-----------")
print(df.loc["day1"])
# info() prints its summary itself and returns None, so it is not wrapped in print()
df.info()
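The cell above selects rows by label with `.loc`. pandas also has `.iloc`, which selects by integer position. Here is a short sketch on the same calories/duration data, comparing the two and showing that label slices include their end label.

```python
import pandas as pd

data = {"calories": [420, 380, 390], "duration": [50, 40, 45]}
df = pd.DataFrame(data, index=["day1", "day2", "day3"])

# loc selects by label, iloc selects by integer position (0-based)
by_label = df.loc["day2"]
by_position = df.iloc[1]

# A single cell: row label plus column name
cal_day3 = df.loc["day3", "calories"]

# loc label slices include the end label; iloc position slices exclude the end position
first_two_loc = df.loc["day1":"day2"]
first_two_iloc = df.iloc[0:2]
print(first_two_loc.equals(first_two_iloc))
```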

import pandas as pd
# read csv and sort 'Duration' largest to smallest
df = pd.read_csv('files/data.csv').sort_values(by=['Duration'], ascending=False)
print("--Duration Top 10---------")
print(df.head(10))
print("--Duration Bottom 10------")
print(df.tail(10))
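Sorting and then calling `head` or `tail` works. pandas also provides `nlargest` and `nsmallest`, which pick the top or bottom n rows by one column in a single call. This sketch uses a small invented table with a Duration column; the values are not from data.csv.

```python
import pandas as pd

# Invented values for illustration only
df = pd.DataFrame({
    "Duration": [60, 45, 120, 30, 90],
    "Calories": [409.1, 282.4, 1000.1, 195.1, 479.0],
})

top3 = df.nlargest(3, "Duration")      # three longest durations
bottom2 = df.nsmallest(2, "Duration")  # two shortest durations

# Same rows as the sort_values + head approach used above (no ties here)
same_top = df.sort_values(by=["Duration"], ascending=False).head(3)
print(top3.equals(same_top))
```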

APIs are a Source for Writing Programs with Data

3rd Party APIs are a great source for creating Pandas Data Frames.

- Data can be fetched, and the resulting JSON can be placed into a DataFrame.

- Observe the output: it looks very similar to a database table.

This code collects data from an API endpoint: the COVID API from the APCSP website.

The data is fetched as JSON, loaded into a DataFrame, and the first 6 rows (0 through 5) are printed.

The first 3 columns are also shown:

- country_name

- cases

- deaths

'''Pandas can be used to analyze data'''
import pandas as pd
import requests

def fetch():
    '''Obtain data from an endpoint'''
    url = "https://flask.nighthawkcodingsociety.com/api/covid/"
    response = requests.get(url)  # named response so it does not shadow the fetch function
    data = response.json()        # parsed JSON as a Python dict

    # filter data for requirement
    df = pd.DataFrame(data['countries_stat'])  # filter endpoint for country stats
    print(df.loc[0:5, 'country_name':'deaths'])  # show rows 0 through 5 and columns country_name through deaths

fetch()
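The endpoint may not always be reachable. The sketch below builds the same kind of DataFrame from a hard-coded dictionary shaped like the response the code expects: a `countries_stat` list of records. The country names and numbers are placeholders, not real COVID figures. Storing counts as text with commas is an assumption included to show a common cleaning step. The sketch also shows that `loc` slices by label include both ends, which is why `0:5` above returns six rows.

```python
import pandas as pd

# Placeholder payload shaped like the expected API response (values are made up)
payload = {
    "countries_stat": [
        {"country_name": "Country A", "cases": "1,000", "deaths": "10", "region": "X"},
        {"country_name": "Country B", "cases": "2,500", "deaths": "25", "region": "Y"},
        {"country_name": "Country C", "cases": "750", "deaths": "5", "region": "Z"},
    ]
}

df = pd.DataFrame(payload["countries_stat"])

# Label slicing: rows 0 through 1 inclusive, columns country_name through deaths
subset = df.loc[0:1, "country_name":"deaths"]
print(subset)

# If counts arrive as text with commas (assumed here), convert them before doing math
df["cases"] = pd.to_numeric(df["cases"].str.replace(",", "", regex=False))
print(df["cases"].sum())
```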

Hacks

Early Seed award

- Add this blog to your own blogging site.

- Have all lecture files saved to your files directory before Tech Talk starts. Have data.csv open in VS Code. Don't tell anyone. Show it to the teacher.

AP Prep

- Add this blog to your own blogging site. In the blog, add notes and observations on each code cell.

- In the blog, add College Board practice problems for 2.3.

Over the next 4 weeks, teachers want you to improve your understanding of data. Look at this blog and others on Unit 2. Your intention is to find some things to differentiate your individual College Board project.

- Create or find your own dataset. The suggestion is to use a JSON file; integrating it with your PBL project would be amazing.

- When choosing a data set, think about the following...

  - Does it have a good sample size?

  - Is there bias in the data?

  - Does the data set need to be cleaned?

  - What is the purpose of the data set?

  - ...

- Continue this blog, using Pandas to extract info from that dataset (ex. max, min, mean, median, mode, etc.).
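Here is a starting-point sketch for the statistics named above, run on a tiny invented dataset. The names and scores are hypothetical; with your own data you would load it instead, for example with `pd.read_json` on your own file.

```python
import pandas as pd

# Hypothetical dataset; swap in your own, e.g. pd.read_json('files/your_data.json')
df = pd.DataFrame({
    "name": ["Ana", "Ben", "Cy", "Dee", "Eli"],
    "score": [88, 92, 75, 92, 80],
})

stats = {
    "max": df["score"].max(),
    "min": df["score"].min(),
    "mean": df["score"].mean(),
    "median": df["score"].median(),
    # mode() returns a Series because several values can tie; take the first
    "mode": df["score"].mode()[0],
}
print(stats)

# describe() reports count, mean, std, min, quartiles, and max in one call
print(df["score"].describe())
```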

Hack Helpers

Here is how Mort started on this assignment, by asking ChatGPT: "Regarding Python Pandas, what are some data sets that would be good for learning Pandas?"

There are many data sets that are suitable for learning pandas, depending on your interests and the skills you want to develop.

Here are some suggestions...

- Titanic Dataset: This is a classic dataset for data analysis and machine learning, and is often used as an introduction to pandas. The dataset contains information about passengers on the Titanic, including their demographics, ticket class, and survival status.

- Iris Dataset: This is another classic dataset that is often used in machine learning and data analysis courses. The dataset contains information about iris flowers, including their sepal length, sepal width, petal length, and petal width.

- NBA Player Stats: This dataset contains information about NBA players, including their stats for various games, their positions, and their salaries. It is a great dataset for learning how to clean and manipulate data using pandas.

- Housing Prices Dataset: This dataset contains information about housing prices in a particular city or region, including variables such as the number of bedrooms, square footage, and price. It is a great dataset for learning how to manipulate and analyze numerical data using pandas.

- COVID-19 Dataset: This dataset contains information about the COVID-19 pandemic, including the number of cases, deaths, and recoveries in various countries and regions. It is a great dataset for learning how to manipulate and analyze time-series data using pandas.

- World Bank Dataset: This dataset contains information about economic indicators from various countries, such as GDP, population, and poverty rates. It is a great dataset for learning how to manipulate and analyze large datasets using pandas.

These are just a few examples of the many datasets that are suitable for learning pandas. The best dataset for you will depend on your interests and the specific skills you want to develop.

Follow-up question: "Where can I find the Titanic data set?"

The Titanic dataset is a popular dataset for data analysis and machine learning, and it can be found on various websites and data repositories. Here are a few places where you can find the Titanic dataset...

- Kaggle: The Titanic dataset is available on Kaggle, which is a popular platform for data scientists and machine learning enthusiasts. You can download the dataset from the Kaggle website after creating an account.

- UCI Machine Learning Repository: The Titanic dataset is also available on the UCI Machine Learning Repository, which is a collection of datasets that are commonly used for machine learning research. You can download the dataset from the UCI Machine Learning Repository website.

- Seaborn library: If you have the Seaborn library installed in your Python environment, you can load the Titanic dataset directly from the library using the following code:

import seaborn as sns
titanic_data = sns.load_dataset('titanic')

import seaborn as sns
# Load the titanic dataset
titanic_data = sns.load_dataset('titanic')
print("Titanic Data")
print(titanic_data.columns) # titanic data set
print(titanic_data[['survived','pclass', 'sex', 'age', 'sibsp', 'parch', 'class', 'fare', 'embark_town']]) # look at selected columns

Use Pandas to clean the data. Most analysis, like machine learning or even Pandas in general, works best when data is in a standardized format. Putting data into that format is called 'cleaning' the data; the cleaned data is what a machine learning model is later 'trained' on.

Cleaned data is easier to analyze and draw conclusions from. Looking at the Titanic, as you clean you would probably want to form assumptions about each passenger's likely chance of survival.

This would involve analyzing various factors (such as age, gender, class, etc.) that may have affected a person's chances of survival, and using that information to make predictions about whether an individual would have survived or not.
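Here is a minimal cleaning sketch on a tiny hand-made table that borrows a few column names from the seaborn Titanic data (survived, sex, age, fare, embark_town). The rows are invented. The steps shown are one reasonable set of choices, not the required ones: drop a column, drop rows with a missing age, and encode sex as 0/1.

```python
import pandas as pd

# Invented rows that reuse a few Titanic column names
td = pd.DataFrame({
    "survived": [0, 1, 1, 0, 1],
    "sex": ["male", "female", "female", "male", "male"],
    "age": [22.0, 38.0, None, 35.0, 4.0],
    "fare": [7.25, 71.28, 7.92, 8.05, 16.70],
    "embark_town": ["Southampton", "Cherbourg", "Southampton", None, "Southampton"],
})

# Drop a text column we will not analyze, then drop rows that still have missing values
clean = td.drop(columns=["embark_town"]).dropna()

# Encode sex as a number so it can be averaged: 1 = male, 0 = female
clean = clean.assign(sex=clean["sex"].map({"male": 1, "female": 0}))

# Survival rate by sex: the mean of a 0/1 column is a proportion
rate_by_sex = clean.groupby("sex")["survived"].mean()
print(rate_by_sex)
```

The embark_town column is dropped before `dropna()`. Otherwise a missing town would also remove a row whose age and fare are fine.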

Data description:

- Survival - Survival (0 = No; 1 = Yes). Not included in test.csv file.

- Pclass - Passenger Class (1 = 1st; 2 = 2nd; 3 = 3rd)

- Name - Name

- Sex - Sex

- Age - Age

- Sibsp - Number of Siblings/Spouses Aboard

- Parch - Number of Parents/Children Aboard

- Ticket - Ticket Number

- Fare - Passenger Fare

- Cabin - Cabin

- Embarked - Port of Embarkation (C = Cherbourg; Q = Queenstown; S = Southampton)

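Perished Mean/Average (numeric_only=True skips text and category columns such as sex, which newer pandas versions refuse to average)

print(titanic_data.query("survived == 0").mean(numeric_only=True))

Survived Mean/Average

print(titanic_data.query("survived == 1").mean(numeric_only=True))

Survived Max and Min Stats

print(titanic_data.query("survived == 1").max(numeric_only=True))
print(titanic_data.query("survived == 1").min(numeric_only=True))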
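Instead of running one query per group, `groupby` computes the same per-group averages side by side. This sketch uses a few invented rows rather than the real Titanic data; `numeric_only=True` again keeps the text column sex out of the averages.

```python
import pandas as pd

# Invented rows; with the real data you would call titanic_data.groupby(...)
td = pd.DataFrame({
    "survived": [0, 1, 1, 0],
    "sex": ["male", "female", "female", "male"],
    "age": [22.0, 38.0, 26.0, 35.0],
    "fare": [7.25, 71.28, 7.92, 8.05],
})

# One row per survived value (0 and 1), averaging each numeric column
means = td.groupby("survived").mean(numeric_only=True)
print(means)

# The survived == 1 row matches the query-based approach
from_query = td.query("survived == 1").mean(numeric_only=True)
print(from_query)
```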

import pandas as pd
df = pd.read_json('files/track.json')
print(df)
print("Fastest 800 M times from table in order")
print(df[['Name', '800 M']].sort_values(by=['800 M']))
print("Fastest 1600 M times from table in order")
print(df[['Name','1600 M']].sort_values(by=['1600 M']))
print("Fastest 3200 M times from table in order")
print(df[['Name', '3200 M']].sort_values(by=['3200 M']))
print("Fastest 3 Mile times from table in order")
print(df[['Name', '3 Mile']].sort_values(by=['3 Mile']))

College Board Practice Problems

Q: Upon compiling the data, the researcher identifies a problem due to the fact that neither data source uses a unique ID number for each student. Which of the following best describes the problem caused by the lack of unique ID numbers?

A: Students who have the same name may be confused with each other.
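Here is a small illustration of why a unique ID matters when two sources are combined. Merging on name alone pairs up two different students who share a name. Both tables and all the names are invented.

```python
import pandas as pd

# Two invented data sources; two different students are both named "Alex Kim"
grades = pd.DataFrame({
    "student_id": [1, 2, 3],
    "name": ["Alex Kim", "Alex Kim", "Sam Lee"],
    "gpa": [3.9, 2.8, 3.4],
})
clubs = pd.DataFrame({
    "student_id": [1, 2, 3],
    "name": ["Alex Kim", "Alex Kim", "Sam Lee"],
    "club": ["Robotics", "Drama", "Chess"],
})

# On name alone, each "Alex Kim" matches both: 2 x 2 = 4 rows, plus 1 for Sam Lee
by_name = grades.merge(clubs, on="name")
print(by_name)

# On a unique ID, each student matches exactly once
by_id = grades.merge(clubs, on="student_id")
print(by_id)
```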

Q: A team of researchers wants to create a program to analyze the amount of pollution reported in roughly 3,000 counties across the United States. The program is intended to combine county data sets and then process the data. Which of the following is most likely to be a challenge in creating the program?

A: Different counties may organize data in different ways.
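Here is a sketch of what "different counties organize data in different ways" can mean in practice. Two invented county reports use different column names and units, so they are mapped to one shared layout before being combined with `pd.concat`. The county names, column names, and values are hypothetical.

```python
import pandas as pd

# Two invented county reports with different column names and units
county_a = pd.DataFrame({"County": ["Alpha"], "Emissions (tons)": [12.5]})
county_b = pd.DataFrame({"county_name": ["Beta"], "emissions_kg": [9000]})

# Rename to a shared schema, then convert kilograms to metric tons (1 ton = 1000 kg)
county_b = county_b.rename(columns={"county_name": "County", "emissions_kg": "Emissions (tons)"})
county_b["Emissions (tons)"] = county_b["Emissions (tons)"] / 1000

combined = pd.concat([county_a, county_b], ignore_index=True)
print(combined)
```

Without the renaming step, `concat` would keep all four column names and fill the mismatched cells with NaN. With roughly 3,000 counties, that mapping work is the real challenge.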

Q: A student is creating a Web site that is intended to display information about a city based on a city name that a user enters in a text field. Which of the following are likely to be challenges associated with processing city names that users might provide as input?

A1: Users might enter abbreviations for the names of cities.

A2: Users might misspell the name of the city.
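Here is one way a program might handle both challenges. Abbreviations are checked against a lookup table, and misspellings are matched with the standard library's `difflib.get_close_matches`. The city list, the abbreviation table, the `normalize_city` helper, and the 0.8 similarity cutoff are all hypothetical choices for illustration.

```python
import difflib

# Hypothetical supported cities and abbreviations
KNOWN_CITIES = ["San Diego", "San Francisco", "Los Angeles", "Sacramento"]
ABBREVIATIONS = {"sd": "San Diego", "sf": "San Francisco", "la": "Los Angeles"}

def normalize_city(text):
    """Return a known city name for user input, or None if nothing is close enough."""
    cleaned = text.strip().lower()
    if cleaned in ABBREVIATIONS:
        return ABBREVIATIONS[cleaned]
    # Compare lowercase names, then map back to the properly capitalized city
    lowered = {city.lower(): city for city in KNOWN_CITIES}
    matches = difflib.get_close_matches(cleaned, lowered.keys(), n=1, cutoff=0.8)
    return lowered[matches[0]] if matches else None

print(normalize_city(" SF "))
print(normalize_city("San Deigo"))
print(normalize_city("Chicago"))
```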

Q: Which of the following additional pieces of information would be most useful in determining the artist with the greatest attendance during a particular month?

A: Average ticket price

Q: A camera mounted on the dashboard of a car captures an image of the view from the driver’s seat every second. Each image is stored as data. Along with each image, the camera also captures and stores the car’s speed, the date and time, and the car’s GPS location as metadata. Which of the following can best be determined using only the data and none of the metadata?

A: The number of bicycles the car passed on a particular day

Q: Which of the following questions about the students who responded to the survey can the teacher answer by analyzing the survey results?

- I. Do students who enjoy the subject material tend to spend more time on homework each night than the other students do?

- II. Do students who spend more time on homework each night tend to spend less time studying for tests than the other students do?

- III. Do students who spend more time studying for tests tend to earn higher grades in the class than the other students do?

A: I and II